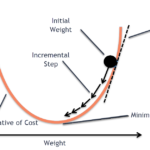

Stochastic Gradient Descent (SGD) is a fundamental optimization algorithm that has become the backbone of modern machine learning, particularly in training deep neural networks. Let's dive deep into how it works, its advantages, and why it's so widely used.

The Core Concept

At its heart, SGD is an optimization technique that helps find the minimum […]
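The excerpt above breaks off before the details, so here is a minimal sketch of the core idea, assuming a one-variable linear regression with a per-sample squared-error loss. The function name `sgd_linear_regression` and its hyperparameters (`lr`, `epochs`, `seed`) are hypothetical illustrations, not the article's code. What makes it *stochastic* is that the parameters are updated after each individual sample, visited in a shuffled order, rather than after a full pass over the data.

```python
import random


def sgd_linear_regression(xs, ys, lr=0.01, epochs=100, seed=0):
    """Fit y ~ w*x + b with plain SGD, updating after every single sample.

    Hypothetical helper for illustration only.
    """
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    indices = list(range(len(xs)))
    for _ in range(epochs):
        rng.shuffle(indices)  # visit samples in a new random order each epoch
        for i in indices:
            error = (w * xs[i] + b) - ys[i]  # prediction minus target
            # Gradients of the per-sample loss 0.5 * error**2
            grad_w = error * xs[i]
            grad_b = error
            # Step against the gradient, scaled by the learning rate
            w -= lr * grad_w
            b -= lr * grad_b
    return w, b


# Noise-free toy data generated from y = 3x + 2
xs = [i / 10 for i in range(20)]
ys = [3 * x + 2 for x in xs]
w, b = sgd_linear_regression(xs, ys, lr=0.05, epochs=500)
print(f"w={w:.3f}, b={b:.3f}")
```

Because the toy data has no noise, the recovered parameters should land very close to `w = 3` and `b = 2`. With real, noisy data, the single-sample updates make the parameters fluctuate around the minimum, which is why learning-rate schedules or mini-batches are commonly used in practice.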

Articles Tagged: sgd
